AI-orchestrated project management: the same technique, a different domain

About a year ago, AI orchestration in software development was a fringe practice. A small number of teams were using it systematically. The results were significant enough — a development department transformed in roughly four months — that it was clear this was not a productivity trick but a structural shift in how technical work could be done.

Now everyone is talking about it.

The pattern is familiar. Early adoption, visible results, a lag of twelve to eighteen months before the mainstream catches up, then rapid convergence. The organizations that moved first have a structural advantage that compounds. Those that move when the conversation becomes mainstream are catching up rather than leading.

The question worth asking now is not how to implement AI orchestration in software development. That answer exists. The question is: what comes next? And the answer is already visible to anyone who looks at the underlying technique rather than the specific domain.

What made AI orchestration work in development

The insight that unlocked AI orchestration for development teams was deceptively simple: a codebase is text.

Not metaphorically. Literally. Source files, configuration, documentation, commit history — all of it is structured text that can be placed in a context window. Once you accept that framing, the mechanics follow directly. You put the relevant parts of the codebase in context. You ask Socratic questions — not "write me a function" but "given this architecture, what are the implications of changing this interface?" You work with the LLM as a reasoning partner that has the full context rather than as a code generator working from a description.

The result was not faster typing. It was better decisions. Teams understood the codebase more deeply, found problems before they became incidents, and made architectural choices with full awareness of the downstream effects. The LLM was not replacing the developer. It was giving the developer a collaborator who had read everything and could reason about all of it simultaneously.

What made it work: the raw material was text, the context window was managed deliberately, and the questions were structured to elicit reasoning rather than output.

The same raw material already exists in project management

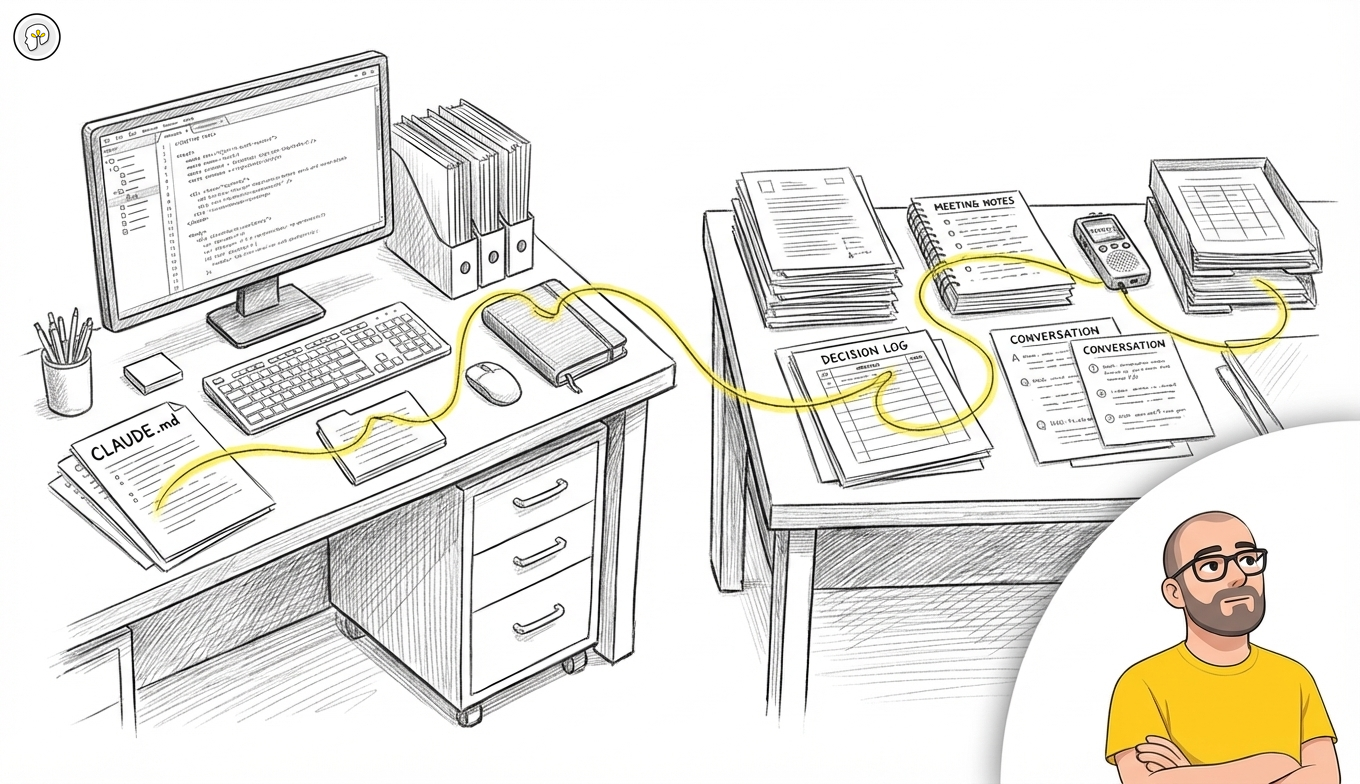

Steering documents are text. Meeting summaries are text. Decision logs are text. Email threads, Slack conversations, retrospectives, risk assessments, stakeholder updates — all text.

The raw material for AI-orchestrated project management already exists in every organization. It has always existed. The difference is that until recently there was no practical way to reason over it at the scale and speed that changes how decisions get made.

The technique that works for a codebase works for a project documentation corpus. You put the relevant documents in context — the steering document, the last three decision logs, the open risk items, the conversation thread from last week's planning session. You ask the same kind of Socratic questions: given these constraints, what is the implication of this timeline change? Which decisions made in the first phase are in tension with the approach being proposed in the third? What assumptions are embedded in this plan that have not been made explicit?
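As a rough illustration of that step, the sketch below assembles a handful of selected documents into a single prompt and appends one of the Socratic questions. It is a minimal sketch under stated assumptions: the `Document` class, the `build_prompt` function, the section delimiters, and the sample texts are all hypothetical, and the call to an actual model is deliberately left out because it depends on the provider you use.

```python
from dataclasses import dataclass


@dataclass
class Document:
    """One piece of project text. Field names are illustrative assumptions."""
    title: str
    kind: str  # e.g. "steering document", "decision log", "risk item"
    text: str


def build_prompt(documents: list[Document], question: str) -> str:
    """Concatenate the selected documents and append a reasoning question."""
    sections = [
        f"=== {doc.kind.upper()}: {doc.title} ===\n{doc.text.strip()}"
        for doc in documents
    ]
    context = "\n\n".join(sections)
    return (
        "You are reasoning over the project documents below.\n\n"
        f"{context}\n\n"
        "Question (explain your reasoning and name the documents you rely on):\n"
        f"{question}"
    )


# Invented sample content, for illustration only.
corpus = [
    Document("Q3 steering document", "steering document",
             "Launch is fixed for 1 October. Scope may flex; budget may not."),
    Document("Decision log 14", "decision log",
             "Phase 1: chose a single vendor to reduce integration cost."),
]

prompt = build_prompt(
    corpus,
    "Given these constraints, what is the implication of moving the launch "
    "by four weeks?",
)
```

The resulting `prompt` string is what you would hand to whichever model you work with. Labelling each section with its kind and title is one possible convention; it makes it easier to ask the model which document a conclusion rests on.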

The LLM does not manage the project. It gives the project lead a collaborator who has read all the documents and can reason about the relationships between them. The same structural shift that transformed development teams applies here.

What this is not

This is not the AI features being added to project management tools. Most of those extract summaries from existing fields, generate status updates from ticket data, or automate routine notifications. That is automation of administration. It is useful, but it is not what we are describing.

AI-orchestrated project management works with raw text outside of structured systems. The conversation that happened before the decision was logged. The steering document that was written six months ago and has not been formally updated but reflects assumptions that are now out of date. The retrospective notes that never made it into any system. The email thread that contains the actual reasoning behind a choice that looks arbitrary in the ticket.

The unstructured, conversational, human record of how a project is actually being run — that is the input. A good LLM, given access to that material and asked the right questions, can surface what no dashboard will show you.

The voice advantage: project work starts verbal

There is one structural difference between a codebase and a project documentation corpus that actually strengthens the case rather than weakening it.

A codebase is already text. A developer writes code, commits it, and the artifact is immediately machine-readable. Project work is largely verbal first. Decisions get made in meetings. Direction gets set in conversations. Context gets established in calls. The written record — if it exists at all — is a summary of what happened, not the raw material of the reasoning.

This is not a problem. It is the point of entry.

When you record and transcribe a meeting, you produce a raw text document that contains the actual language people used, the questions that were raised, the objections that were addressed, and the reasoning behind the outcome. That document is richer than any formal minutes. It captures what the decision log never will.

Structured AI workflows for voice — recording, transcription, targeted extraction — are already understood and already deployed in organizations using AI seriously. The same pipeline that turns a sales call into structured customer intelligence turns a project steering meeting into a structured record of constraints, decisions, and open questions. The technique is identical. The domain is different.
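The targeted-extraction step of that pipeline might look roughly like the sketch below. It assumes recording and transcription have already produced a plain-text transcript, and it assumes the model is asked to reply with JSON. `MeetingRecord`, `build_extraction_prompt`, `parse_record`, and the JSON keys are hypothetical names chosen for this example; the model reply shown is hand-written, not real output.

```python
import json
from dataclasses import dataclass, field


@dataclass
class MeetingRecord:
    """Structured result of one meeting. Field names are assumptions."""
    constraints: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)


EXTRACTION_PROMPT = (
    "From the meeting transcript below, extract constraints, decisions, and "
    "open questions. Reply with JSON only, using the keys "
    '"constraints", "decisions", and "open_questions", each a list of strings.'
    "\n\nTranscript:\n{transcript}"
)


def build_extraction_prompt(transcript: str) -> str:
    """Insert the raw transcript into the extraction instructions."""
    return EXTRACTION_PROMPT.format(transcript=transcript.strip())


def parse_record(model_reply: str) -> MeetingRecord:
    """Turn the model's JSON reply into a MeetingRecord.

    Missing keys become empty lists; invalid JSON raises json.JSONDecodeError.
    """
    data = json.loads(model_reply)
    return MeetingRecord(
        constraints=[str(item) for item in data.get("constraints", [])],
        decisions=[str(item) for item in data.get("decisions", [])],
        open_questions=[str(item) for item in data.get("open_questions", [])],
    )


# Hand-written stand-in for a model reply, for illustration only.
reply = (
    '{"constraints": ["Budget is fixed"], '
    '"decisions": ["Move pilot to November"], '
    '"open_questions": ["Who owns vendor onboarding?"]}'
)
record = parse_record(reply)
```

Keeping the raw transcript alongside the extracted record is a sensible design choice here: the record is for quick reference, while the transcript stays available as context for later questions.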

This means that AI-orchestrated project management has a raw-material advantage that software development does not: the most important project conversations, once transcribed, are richer context than anything that ends up in a formal document. The informal, the verbal, and the off-the-record all become usable when you treat conversation as data.

Context window management is the skill

The same discipline that makes AI orchestration work in development makes it work in project management. The context window is finite. What you put in determines what the model can reason about. Putting everything in is not a strategy.

The skill is selection: which documents are actually relevant to this decision? Which conversations contain the context that is missing from the formal record? Which earlier decisions are in active tension with the current proposal?
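One way to make the selection explicit is a budgeted pick: the project lead scores each candidate for relevance to the decision at hand, and the most relevant items that fit are included. The sketch below is an assumption-laden illustration, not a prescribed method. `Candidate`, `estimate_tokens`, and `select_for_context` are hypothetical names, the relevance scores come from human judgment, and the four-characters-per-token figure is only a rough heuristic rather than a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    title: str
    text: str
    relevance: int  # assigned by the project lead; higher means more relevant


def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about four characters per token."""
    return max(1, len(text) // 4)


def select_for_context(candidates: list[Candidate],
                       budget_tokens: int) -> list[Candidate]:
    """Greedily keep the most relevant candidates that still fit the budget."""
    chosen: list[Candidate] = []
    remaining = budget_tokens
    for cand in sorted(candidates, key=lambda c: c.relevance, reverse=True):
        cost = estimate_tokens(cand.text)
        if cost <= remaining:
            chosen.append(cand)
            remaining -= cost
    return chosen


# Placeholder texts sized to make the arithmetic easy to follow.
candidates = [
    Candidate("Steering document", "x" * 400, relevance=3),   # ~100 tokens
    Candidate("Full email archive", "y" * 800, relevance=2),  # ~200 tokens
    Candidate("Retrospective notes", "z" * 200, relevance=1), # ~50 tokens
]
selected = select_for_context(candidates, budget_tokens=160)
selected_titles = [c.title for c in selected]
# selected_titles == ["Steering document", "Retrospective notes"]
```

The code only enforces the budget. The judgment lives in the relevance scores, which is where the skill described above actually sits.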

This is not a technical skill. It is a knowledge work skill. The project lead who can make those selections well gets dramatically more leverage from the technique than the one who cannot. That is exactly the pattern that emerged in development teams: the senior developers, the ones who already understood the codebase structurally, got the most leverage from AI orchestration. It amplified existing judgment rather than substituting for it.

The choice that exists right now

Organizations that started applying this technique in software development a year ago spent several months finding what worked, building the conventions, and accumulating the compounding returns of a team that has internalized a new way of working. By the time it became a mainstream conversation, they had a lead that takes time to close.

The same window exists now for project management, knowledge work, and operational decision-making. The technique is understood. The tools are available. The raw material — text, conversations, documents — is already there.

The question is the same one that faced development teams a year ago: do this now, when the advantage is available, or wait until it is a standard expectation and the advantage is gone.

The technique does not change. The timing does.
