AI stops at the PR — and that's the rule

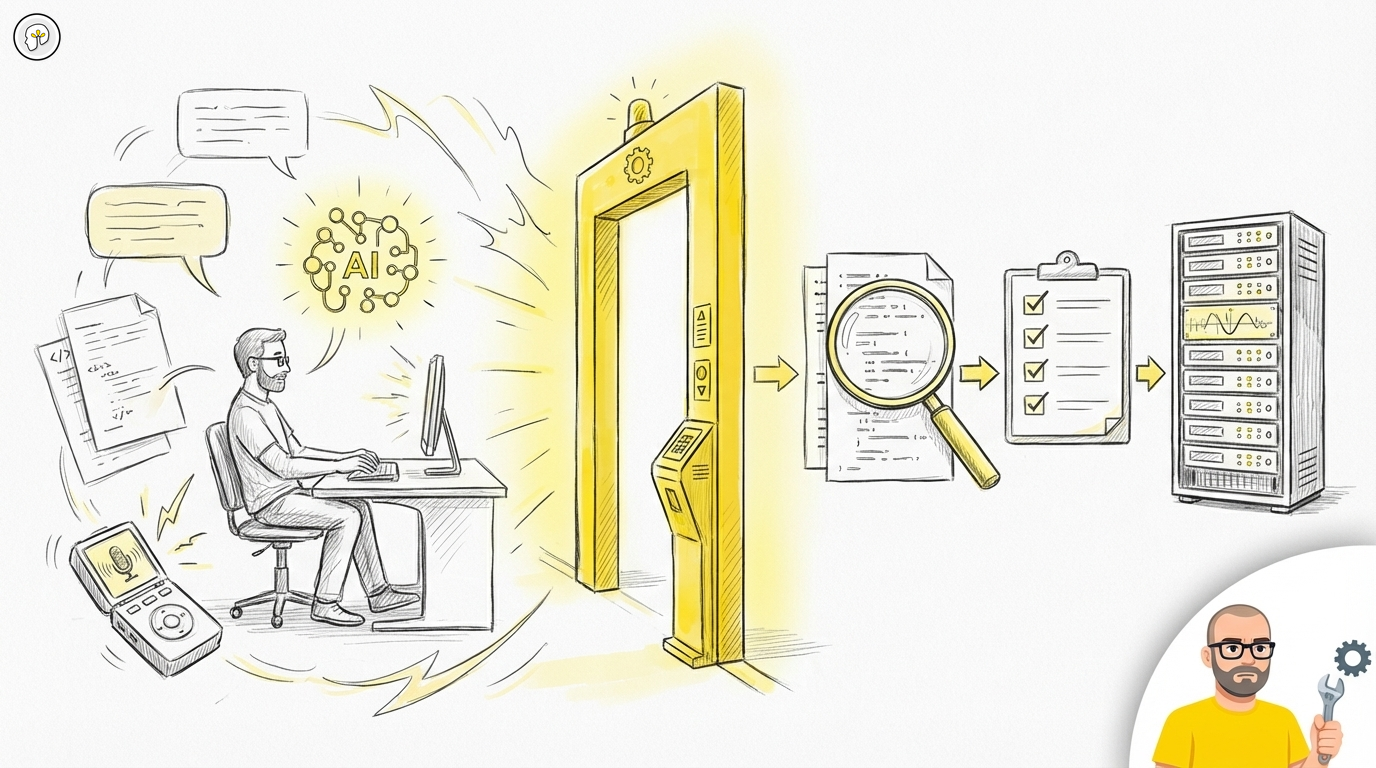

Teams arguing about how much to trust AI in their development process are usually asking the wrong question. The question isn't how much — it's until when.

One boundary resolves most of the debate: the Pull Request (PR) — the point where your code moves from your machine into your team's shared review process.

Two failure modes I keep seeing

The first failure mode is excessive caution. Teams that distrust AI refuse to use it anywhere near their codebase. They miss the productivity gains that come from freely experimenting, drafting, and iterating before anything is committed. The tool sits unused or underused because nobody agreed on when it was acceptable.

The second failure mode is excessive trust. Teams that over-rely on AI let it run through the entire pipeline — generating code, skipping review, pushing to staging, deploying to production — with humans acting as rubber stamps at best. This isn't AI-assisted development. It's abdication.

Both failures come from the same source: no clear boundary.

What the PR actually represents

A Pull Request is the moment your code leaves your machine and enters your team's shared process. It's where individual work becomes collective responsibility. Code review, automated tests, staging environments, compliance checks — these are the gates your organization decided matter.

They weren't built for AI. They were built because shipping broken code has consequences.

That doesn't change when AI writes the code. If anything, it matters more. AI produces output confidently regardless of correctness. Your quality gates are the last line of verification that what gets merged is actually what was intended.

Before the PR: full freedom

Up to the moment you open the PR, use AI however you want.

Generate first drafts. Explore three different implementations of the same function and pick the best one. Dictate your requirements out loud and let AI write the spec. Iterate on architecture decisions by asking AI to challenge your assumptions. Have it write boilerplate you'd otherwise spend an hour on.

All of that is before the gate. None of it bypasses anything.

The only condition is the one that always applied: you understand what you're committing. Not every token the AI produced, but the intent, the structure, and the potential failure modes. That understanding is not AI's job. It never was.

After the PR: gates unchanged

From the moment you open the PR, your existing process takes over. Unchanged.

Code review happens because a colleague's eye catches things that automated tools miss and that you're too close to see. Automated tests run because regressions need to be caught before they reach users. Staging environments exist because production behavior is never perfectly simulated locally. Compliance checks exist because your organization is accountable for what it ships.

AI doesn't get to skip any of that. Not because AI is untrustworthy — but because those gates aren't checkpoints for distrust. They're checkpoints for quality.

The mistake is thinking that faster AI generation means the downstream process should speed up too. It shouldn't. If AI helps you arrive at the PR with better code, faster, the PR is still the PR.
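To make "gates unchanged" concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `PullRequest` fields, the `REQUIRED_APPROVALS` threshold, and the `ready_to_merge` function are illustrative names, not any real platform's API. The point it shows is that the merge decision never reads the `ai_assisted` flag.

```python
from dataclasses import dataclass

# Hypothetical threshold; use whatever your team already requires.
REQUIRED_APPROVALS = 1


@dataclass(frozen=True)
class PullRequest:
    # All fields are illustrative assumptions, not a real platform's schema.
    ai_assisted: bool        # recorded for transparency only
    human_approvals: int     # approvals from human reviewers
    tests_passed: bool       # automated test suite result
    staging_verified: bool   # change was checked in staging
    compliance_ok: bool      # compliance checks completed


def ready_to_merge(pr: PullRequest) -> bool:
    """Apply the same gates to every PR.

    The ai_assisted flag is deliberately not consulted: the gates
    neither loosen nor tighten based on who or what wrote the code.
    """
    return (
        pr.human_approvals >= REQUIRED_APPROVALS
        and pr.tests_passed
        and pr.staging_verified
        and pr.compliance_ok
    )
```

Two PRs that differ only in `ai_assisted` get the same answer from `ready_to_merge`, which is the whole idea.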

Why this rule works in practice

It's specific enough to follow. Teams can answer any "should we use AI here?" question by asking: are we before or after the PR boundary? If before — go ahead. If after — the existing process applies.
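The rule is small enough to write down as a two-branch function. This is only a sketch: the `Stage` enum and the `guidance` function are hypothetical names, and the returned strings paraphrase the rule rather than quote any policy.

```python
from enum import Enum


class Stage(Enum):
    # Hypothetical labels for the two sides of the PR boundary.
    BEFORE_PR = "before"
    AFTER_PR = "after"


def guidance(stage: Stage) -> str:
    """Answer 'should we use AI here?' from the stage alone."""
    if stage is Stage.BEFORE_PR:
        return "Go ahead: use AI freely, but understand what you commit."
    return "The existing process applies: review, tests, staging, compliance."
```

There is no third branch, and that is what makes the rule easy to follow.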

It doesn't require a new policy document, a new tool, or a new governance layer. It uses the boundary your team already has.

It scales. A solo developer working on a personal project has different gates than a team shipping regulated software. The rule is the same in both cases — it just maps to whatever process you actually have.

And it creates the right accountability structure. Whoever opens the PR owns what's in it. They used AI, they reviewed it, they decided it was ready for the team's process. That's not different from writing the code themselves. The ownership is identical.

The trap: using AI to shortcut the gate itself

One pattern to watch for: using AI to generate the code review, fabricate the test results, or draft the approval comment. That isn't AI-assisted development before the PR. That's using AI to simulate the gate rather than pass through it.

The gates exist to catch what people miss, including what the author of the code missed. Asking AI to review code that AI generated closes a loop that was deliberately kept open.
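One way to keep that loop open is to be explicit about which approvals count. The sketch below assumes a hypothetical `Approval` record with an `is_human` flag; how a team would actually determine that flag is left open. It counts an approval only if it comes from a human who is not the PR author.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    # Hypothetical record; real review systems expose different fields.
    reviewer: str
    is_human: bool


def counting_approvals(author: str, approvals: list[Approval]) -> list[Approval]:
    """Keep only approvals from humans other than the PR author."""
    return [a for a in approvals if a.is_human and a.reviewer != author]


def gate_is_real(author: str, approvals: list[Approval], required: int = 1) -> bool:
    """True when enough independent human approvals exist."""
    return len(counting_approvals(author, approvals)) >= required
```

For example, with approvals from an automated reviewer and from `alice`, a PR opened by `alice` does not pass, while the same approvals on a PR opened by `bob` do:

```python
approvals = [Approval("ai-review-bot", is_human=False), Approval("alice", is_human=True)]
gate_is_real("alice", approvals)  # False: self-approval and bot approval don't count
gate_is_real("bob", approvals)    # True: alice is an independent human reviewer
```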

The rule only works if the gate is real.

The PR boundary isn't a constraint on AI use. It's a clear line that makes more AI use possible — because developers know exactly where their autonomy ends and their accountability begins.

That's not a limitation. It's the design.