AI Development Accountability Levels

The most common mistake in AI-assisted development is not a technical one. It is applying the wrong discipline for the context you are in.

The Mindtastic accountability framework starts from one question: who bears the consequences if something goes wrong? The answer determines which discipline is appropriate — and which shortcuts are rational versus dangerous.

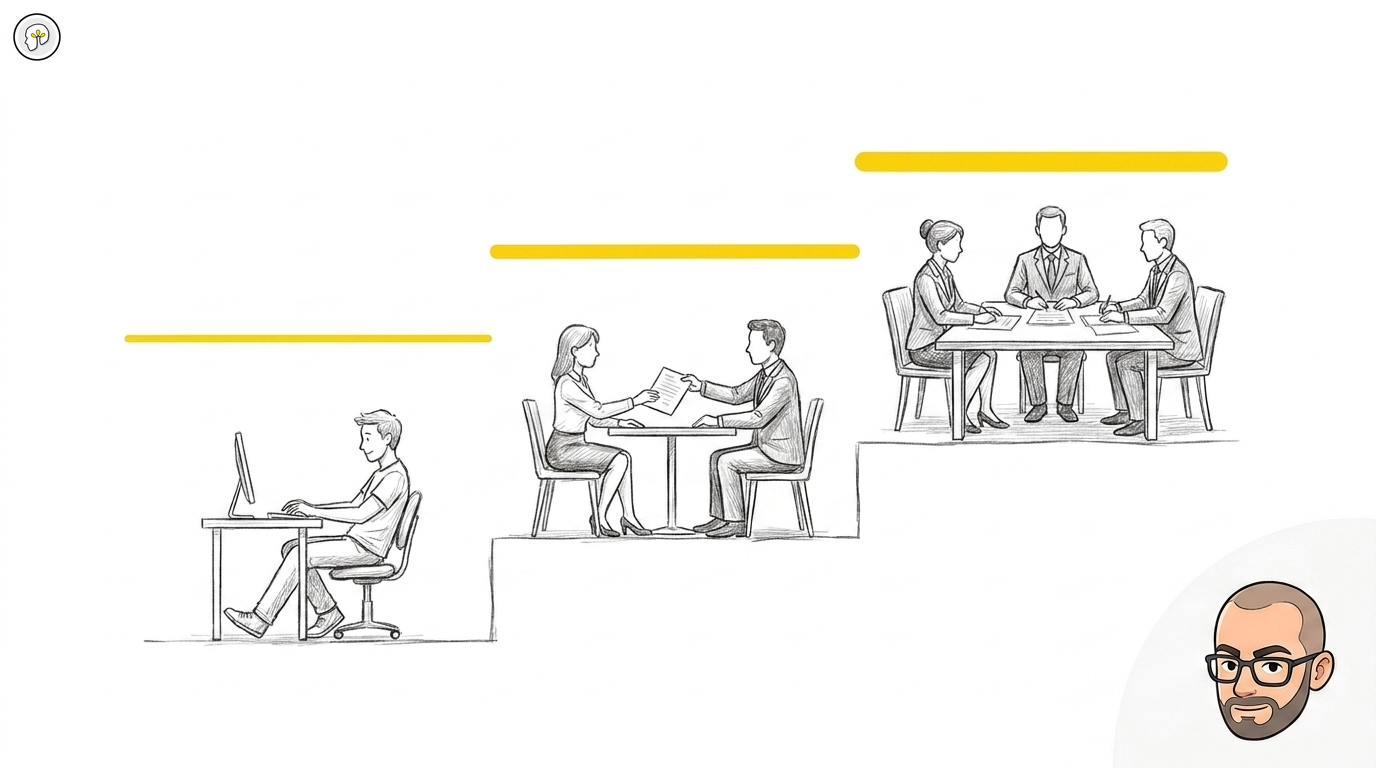

There are three levels.

Level 1 — Solo (>10×)

Swedish: Solo | English: Solo | Performance ceiling: >10×

Consequences land on: You alone.

You are building something for your own purpose. It may be a personal tool, an internal script, a learning project, or a production system — but only you are affected if it fails.

At this level, flexibility is rational. You can choose how carefully to review AI-generated code because the cost of getting it wrong falls entirely on you. Vibe coding (accepting AI output without deep review) is a legitimate choice here, not a professional breach.

Natural drift: Toward vibe coding. This is fine at solo level.

Minimum policy: None required. The work loop is good practice, not an obligation.

What solo level looks like: A developer building their own scheduling tool. A consultant automating their own invoice process. A team member running a personal experiment in a development branch. An engineer building internal analytics that only they use.

What solo level does not mean: "experimental" or "not serious." Solo work can be fully production-ready. The defining characteristic is consequence destination — not code quality or deployment environment.

Level 2 — Engagement (5–10×)

Swedish: Uppdrag | English: Engagement | Performance ceiling: 5–10×

Consequences land on: You and the client.

You are delivering to someone else. Their system. Their data. Their business processes. Your name is on the contract — and on the code.

At this level, the work loop stops being optional. You must be able to say "I understood every line I committed" — not because a process mandates it, but because you are professionally responsible for what you deliver. Solo habits ("it looks right") are a professional breach at engagement level.

Natural drift: Solo habits creep in. Speed replaces review.

Minimum policy: The work loop is non-negotiable. Every commit requires understanding. You cannot claim professional delivery without it.

What engagement level looks like: A freelance developer delivering a feature to a client. A consultant building a workflow integration. A developer at an agency working on a client's production codebase. A contractor inside a client's infrastructure.

Level 3 — Team Delivery (2–5×)

Swedish: Bolagsleverans | English: Team delivery | Performance ceiling: 2–5×

Consequences land on: The organisation and the client.

A team is delivering to a client. The structural challenge is that team accountability can diffuse to nothing — "everyone reviewed the code" means no one reviewed the code. When no single person feels responsible for a specific outcome, accountability disappears without any individual making a bad decision.

The 2–5× ceiling is not a failure. It reflects the governance overhead that is appropriate and necessary when consequences land outside the team. A team delivering at 3× under proper discipline is doing riskier work correctly. A team claiming 10× at team delivery level is almost certainly operating with solo habits — and the accountability is invisible until something breaks.

Natural drift: Accountability diffusion. "We" replaces "I."

Minimum policy: Named owner per commit. Team decisions on AI policy (what data goes to external models, recording consent, parallel context threads, automation boundaries, shared terminology). These decisions must be made before delivery begins — they surface as urgent problems mid-delivery if left unresolved.

What team delivery looks like: A development team at a software company. An in-house engineering team building for internal stakeholders. A team at an agency delivering to enterprise clients. Any context where more than one person commits to a shared codebase with external consequences.

The most common failure pattern: A team at team delivery level operating with solo habits, meaning individual AI use without shared policy, no named commit owners, and no team decisions about what is allowed. The output looks correct. The accountability is invisible until something breaks.

The performance multipliers explained

The multipliers (>10×, 5–10×, 2–5×) represent realistic performance ceilings per level — not promises.

The base is the knowledge multiplier: a senior developer who uses AI systematically gains roughly 4–5× in individual productivity, whatever the context (this is the individual knowledge amplification effect from consistent AI use). This is the starting point, before any governance overhead is applied.

The level determines the ceiling. Governance overhead — review requirements, named ownership, team policy decisions — compresses the raw velocity gain. This overhead is not waste. It is the appropriate cost of working in contexts where consequences fall on others.

| Level | Swedish | English | Ceiling | Why the ceiling drops |
|---|---|---|---|---|
| 1 | Solo | Solo | >10× | No governance overhead; you set your own rules |
| 2 | Uppdrag | Engagement | 5–10× | Work loop required. You must understand every commit. |
| 3 | Bolagsleverans | Team delivery | 2–5× | Named ownership + shared policy decisions + coordination cost |
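
The table can be read as a small lookup keyed by who bears the consequences. The sketch below is one possible encoding, not part of the framework itself: the names `Level`, `LevelProfile`, `PROFILES`, and `classify`, and the two boolean inputs, are assumptions made for illustration. It also simplifies the framework's opening question into two yes/no answers.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    SOLO = 1
    ENGAGEMENT = 2
    TEAM_DELIVERY = 3


@dataclass(frozen=True)
class LevelProfile:
    swedish: str
    ceiling: str
    minimum_policy: str


# Values copied from the level descriptions and the table above.
PROFILES = {
    Level.SOLO: LevelProfile("Solo", ">10×", "none required"),
    Level.ENGAGEMENT: LevelProfile("Uppdrag", "5–10×", "work loop on every commit"),
    Level.TEAM_DELIVERY: LevelProfile(
        "Bolagsleverans", "2–5×", "named owner per commit, shared AI policy"
    ),
}


def classify(affects_others: bool, delivered_by_team: bool) -> Level:
    """Pick the level from consequence destination, not from code quality.

    Assumption: if only the author is affected, the work is solo even
    when the author happens to sit in a team.
    """
    if not affects_others:
        return Level.SOLO
    return Level.TEAM_DELIVERY if delivered_by_team else Level.ENGAGEMENT
```

For example, `classify(affects_others=True, delivered_by_team=False)` returns `Level.ENGAGEMENT`, and `PROFILES[Level.ENGAGEMENT].minimum_policy` gives the matching minimum policy.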

The approach spectrum

The three accountability levels are a separate dimension from the three approaches to AI-assisted development. Applying an approach at a level it does not suit is one of the most common mismatches.

| Approach | What it is | Appropriate at |
|---|---|---|
| Vibe coding | Accept AI output without deep review. Fast iteration, low validation. | Solo level. Minimal engagement tasks with low stakes. |
| Structured balance | Systematic AI use with mandatory review at each step. The default for professional delivery. | Engagement and team delivery. |
| Hardcore planning | Full specification before AI-assisted implementation. Maximum control, highest overhead. | Team delivery for critical paths. Complex systems with high failure cost. |

The approach is a tool choice. The level is a fact about your context. Match the tool to the context.
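
One way to make the matching rule checkable is a small predicate over approach and level. This is a hedged sketch of one reading of the table. The function name `approach_fits` and the flags `low_stakes` and `critical` are hypothetical, and the table's "complex systems with high failure cost" clause is folded into the `critical` flag as a simplification.

```python
def approach_fits(
    approach: str,
    level: str,
    low_stakes: bool = False,
    critical: bool = False,
) -> bool:
    """Return True if the approach suits the level, per the table above.

    `level` is one of "solo", "engagement", "team delivery".
    """
    if approach == "vibe coding":
        # Solo level, or minimal low-stakes engagement tasks.
        return level == "solo" or (level == "engagement" and low_stakes)
    if approach == "structured balance":
        # The default for professional delivery.
        return level in ("engagement", "team delivery")
    if approach == "hardcore planning":
        # Reserved here for critical paths at team delivery level.
        return level == "team delivery" and critical
    raise ValueError(f"unknown approach: {approach!r}")
```

Under this reading, `approach_fits("vibe coding", "team delivery")` is `False`, which is the mismatch the framework warns about.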

Key terms

Arbetsloopen / The work loop

A six-step method for AI-assisted development that makes accountability concrete at each stage: read the codebase, plan the change, flush context, build, review (confidence score), commit and document. The commit step is where accountability is made real — it requires a named person who understood every line they signed.
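
The six steps can be written down as an ordered sequence. The sketch below assumes the steps are completed strictly in the order listed, which the text implies but does not state outright. `WORK_LOOP` and `next_step` are illustrative names, not part of the method.

```python
from typing import List, Optional

# Step names taken from the definition above.
WORK_LOOP = (
    "read the codebase",
    "plan the change",
    "flush context",
    "build",
    "review",
    "commit and document",
)


def next_step(completed: List[str]) -> Optional[str]:
    """Return the next step of the loop, or None when all six are done.

    Raises ValueError if the completed steps are not a prefix of the loop,
    for example if "build" was done before "plan the change".
    """
    if tuple(completed) != WORK_LOOP[: len(completed)]:
        raise ValueError("steps must be completed in work-loop order")
    if len(completed) == len(WORK_LOOP):
        return None
    return WORK_LOOP[len(completed)]
```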

Commit-ankaret / The commit anchor

The commit is the only accountability mechanism that survives team scale. It has a name on it. One developer. That developer either understood every line at the moment of committing — or did not. There is no collective commit. This is why the commit step in the work loop is not administrative: it is the accountability step.
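
A team could enforce "one name per commit" mechanically, for instance by checking the commit message before it is accepted. The sketch below assumes a hypothetical `Owner:` line in the commit message. That convention, the function name `named_owner`, and the list of rejected collective names are illustrative assumptions, not something the framework prescribes.

```python
import re

# Hypothetical convention: exactly one "Owner: <name>" line per commit message.
OWNER_LINE = re.compile(r"^Owner:[ \t]*(\S.*)$", re.MULTILINE)

# Collective names that signal diffusion rather than ownership (illustrative).
COLLECTIVE_NAMES = {"team", "we", "everyone", "all"}


def named_owner(commit_message: str) -> str:
    """Return the single named owner, or raise ValueError if there is none."""
    owners = [name.strip() for name in OWNER_LINE.findall(commit_message)]
    if len(owners) != 1:
        raise ValueError(f"expected exactly one Owner line, found {len(owners)}")
    if owners[0].lower() in COLLECTIVE_NAMES:
        raise ValueError("owner must be a named person, not a collective")
    return owners[0]
```

A check like this only verifies that a name is present. Whether that person understood every line is still a matter of professional discipline.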

Konfidenspoäng / Confidence score

A self-assessed rating before committing AI-generated code. High: I understand every line and can explain it. Medium: I understand most of it but have uncertainty — review more before committing. Low: I do not understand why this works — stop. The confidence score makes accountability visible before it is too late to act on it.
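
The three ratings map directly to three actions. A minimal sketch of that mapping follows, with `Confidence` and `next_action` as hypothetical names chosen for illustration.

```python
from enum import Enum


class Confidence(Enum):
    HIGH = "high"      # I understand every line and can explain it
    MEDIUM = "medium"  # I understand most of it but have uncertainty
    LOW = "low"        # I do not understand why this works


def next_action(score: Confidence) -> str:
    """Translate a self-assessed confidence score into the next action."""
    if score is Confidence.HIGH:
        return "commit"
    if score is Confidence.MEDIUM:
        return "review more before committing"
    return "stop"
```

The score stays a self-assessment. The code only makes the rule explicit that anything below high does not lead to a commit.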

Kontexthantering / Context management

Managing what the AI "sees" in a given session. Too much context creates confusion. Too little loses coherence. Parallel context threads on the same codebase create silent conflicts — a file changed in one thread is not updated in another's context window. At team delivery level, context management is a shared policy question, not an individual preference.
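
The silent conflict between parallel threads can be illustrated with content fingerprints: record a hash of each file when it enters a thread's context, then compare against the files as they are now. This is a sketch under assumptions. The names `fingerprint` and `stale_files` and the dictionary layout are hypothetical, and real AI tools may track context differently or not at all.

```python
import hashlib
from typing import Dict, List


def fingerprint(content: bytes) -> str:
    """Hash file content so later changes can be detected."""
    return hashlib.sha256(content).hexdigest()


def stale_files(loaded: Dict[str, str], current: Dict[str, bytes]) -> List[str]:
    """List files that changed or disappeared since a thread loaded them.

    `loaded` maps path -> fingerprint taken when the file entered the context.
    `current` maps path -> the file's content right now.
    """
    return sorted(
        path
        for path, digest in loaded.items()
        if path not in current or fingerprint(current[path]) != digest
    )
```

If another thread edits `b.py` after this thread loaded it, `stale_files` reports `b.py`, which is the cue to reload the file before building on it.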

Ansvarsdiffusion / Accountability diffusion

The structural tendency for team-level accountability to distribute until no individual feels responsible for any specific outcome. "Everyone reviewed it" is the canonical diffusion statement. The work loop and named commit ownership are the structural counters to diffusion.

Why this framework exists

Most AI training programs do not distinguish between accountability levels before deciding what discipline to teach. They treat all contexts as equivalent.

The consequence: solo habits (rational for solo work) become the default at team delivery level, where they are dangerous. The failure is silent because the output looks correct. The accountability gap only becomes visible after something goes wrong.

Mindtastic trains team delivery discipline by default — Solo and Engagement are covered because understanding the levels is necessary to understand why Team delivery requires what it requires.

For the complete framework this builds on: The Foundation — Four Pillars and the Work Loop

For the approach spectrum in detail: Vibe Coding vs. AI Orchestration

For how accountability works at team scale: Accountability in Teams — Commit as Anchor

For the governance layer: Track 4 — Team Delivery Policy Workshop